Adobe has unveiled “Project Fast Fill”, a generative fill feature that uses artificial intelligence to add or remove objects in videos. It was one of many AI announcements made at Adobe’s MAX conference. In Adobe’s demonstrations, Project Fast Fill could change clothing accessories on people who are moving or erase tourists from a wide landscape shot.

Its functionality resembles Google’s Magic Editor. Where Magic Editor manipulates people or objects in photographs, however, Fast Fill works on video. It aims to do for video what Adobe’s Project Stardust does for photographs, such as changing colors from a simple text prompt. The feature is powered by Adobe’s updated Firefly generative AI models.

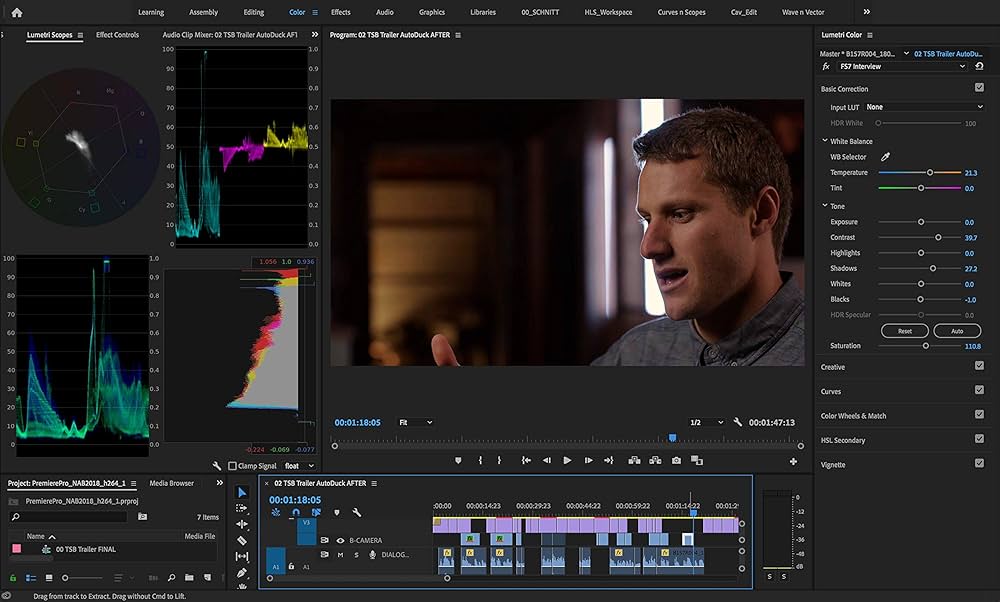

Image: Adobe

Project Fast Fill is, for now, an experimental project from Adobe’s engineers. Still, features demonstrated at previous Adobe conferences have often made their way to Creative Cloud users. Adobe envisions the feature being used in Premiere Pro and After Effects, though no official launch date has been set.

Adobe also showed other AI-driven editing technologies covering video, audio, and 3D design. “Project Dub Dub Dub” can translate speech into multiple languages. “Scene Change” can move subjects into different scenes. “Res Up” improves the quality of low-resolution video, and “Project Poseable” can recreate human poses in 3D from photos of real people and generate 3D visualizations from text prompts.

As AI-powered photo tools like the Magic Editor on Google’s Pixel 8 become increasingly common, the question of what really constitutes a photograph becomes more pressing. With Project Fast Fill on the horizon, similar capabilities for video might soon reach a broader audience.