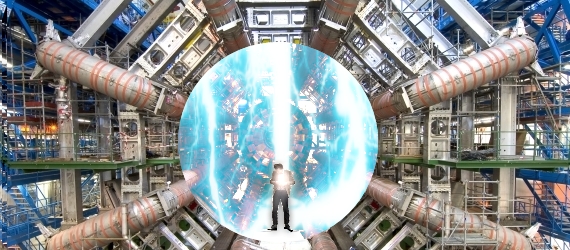

50,000,000 GB of Data Recorded by Large Hadron Collider

Most of us have heard of the Large Hadron Collider (LHC), which physicists and astronomers expect will answer the most basic questions about the universe and how matter came into being. The LHC is one of the most powerful particle accelerators ever created. What few realize is how many different components make the LHC a success, and one of them is the computer grid that supports it.

The LHC produces huge amounts of data at lightning speed, and the project gave birth to one of the biggest computer grids on the planet. The output from the collisions that occur in the LHC is fed into the grid to be stored for later analysis.

“LHC uses only 10-15 percent of the total CPU power dedicated to the data,” said CERN’s Wolfgang von Rueden. “LHC produces more data than we can possibly store, so removing unnecessary data is most important,” he said. The LHC has about 2,000 CPUs at each of its four detectors, which filter out the interesting collisions.

The networks at the LHC are extremely fast. The CPUs filter the data, reducing it from 1 petabyte (PB) per second to 1 gigabyte (GB) per second, which is transferred from the detectors to the main facility over a dedicated 10 Gbps connection. Once the data is transferred, it is stored on tape. “We have about 50 PB of tape storage, and it is handled by a set of robotic storage hardware,” von Rueden said. He added that the existing capacity was almost full and that they had recently scaled up by another 20 PB. Mind you, 1 petabyte = 1,000,000 gigabytes!
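To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above and decimal units (1 PB = 1,000,000 GB). The variable names are made up for illustration, and the fill-time estimate assumes the filtered 1 GB per second stream runs non-stop, which is a simplification and not something the article states.

```python
# Back-of-the-envelope arithmetic using the figures quoted in the article.
# Assumption: decimal units, 1 PB = 1,000,000 GB, as the article states.
GB_PER_PB = 1_000_000

tape_capacity_pb = 50            # existing tape storage
extra_capacity_pb = 20           # recent expansion
raw_rate_gb_s = 1 * GB_PER_PB    # 1 PB/s coming off the detectors
filtered_rate_gb_s = 1           # 1 GB/s after the CPUs filter it
link_gbps = 10                   # dedicated link, in gigabits per second

# 50 PB expressed in gigabytes (the number in the headline).
tape_capacity_gb = tape_capacity_pb * GB_PER_PB

# How much the filtering shrinks the data stream.
reduction_factor = raw_rate_gb_s // filtered_rate_gb_s

# 1 gigabyte per second is 8 gigabits per second.
filtered_rate_gbps = filtered_rate_gb_s * 8
link_utilization = filtered_rate_gbps / link_gbps

# Hypothetical: days to fill the extra 20 PB if 1 GB/s flowed continuously.
seconds_per_day = 86_400
days_to_fill_extra = (
    extra_capacity_pb * GB_PER_PB / filtered_rate_gb_s / seconds_per_day
)

print(f"Tape capacity: {tape_capacity_gb:,} GB")
print(f"Filtering reduces the data by a factor of {reduction_factor:,}")
print(f"Filtered stream uses {link_utilization:.0%} of the 10 Gbps link")
print(f"Extra 20 PB would last about {days_to_fill_extra:.0f} days at 1 GB/s")
```

In other words, the filters throw away all but roughly one millionth of the raw data, and the stream that remains would still use most of a 10 Gbps link.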

Linux plays a lead role in serving the LHC. The disks, which are managed as a cloud service, are all controlled by Linux boxes fed by 1 Gbps connections.

Although it is loaded with the latest technology, the CERN data center is 35 years old and now hosts clusters in a space that was built for supercomputers. CERN has, however, made many upgrades and improvements to its aging center so that it can support the LHC for another 20 years.

You can read more here.
